The Difference Between AI That Completes a Workflow and AI That Is Allowed to Complete One

Frameworks made AI systems easier to build. Native architectures make them easier to own. Governed execution makes them harder to lie with.

A recent article from Towards Data Science made a point that is becoming harder to ignore:

AI engineers are moving beyond frameworks like LangChain because production systems demand more visibility, more control, and less hidden behavior.

The argument is simple.

Frameworks helped the first wave of LLM applications get built quickly. They made retrieval, memory, tool use, and multi-step orchestration easier to assemble. That mattered, especially when most teams were still trying to figure out what an AI application even looked like.

But the cost appears later.

A system works in a demo.

It works in early testing.

Then something breaks in production.

And the logs tell you what happened, but not why.

That distinction matters.

Because in production AI systems, the problem is not only whether the system produced an output.

The deeper question is:

Was the system allowed to produce that output?

That is where abstraction debt becomes operational risk.

The first move beyond abstraction

The common response is to move from framework-managed orchestration to native agent architecture.

Instead of relying on a framework to manage chains, memory, tools, callbacks, and state, engineers start writing the orchestration layer themselves.

That gives them:

- clearer execution paths

- direct control over state

- testable tool functions

- better instrumentation

- fewer hidden framework behaviors

This is a real improvement.

If a system matters, owning the orchestration layer is usually better than outsourcing its behavior to a thick abstraction.

But native orchestration does not automatically solve the deeper problem.

Owning the code is not the same as governing the execution.

A custom system can still continue with missing context.

It can still produce a success-shaped result from partial state.

It can still skip or weaken verification.

It can still return an answer that looks complete even though the proof chain is broken.

So the question is not only:

“Did we build the orchestration ourselves?”

The better question is:

“What conditions must be true before this system is permitted to continue?”

That is the boundary between native execution and governed execution.

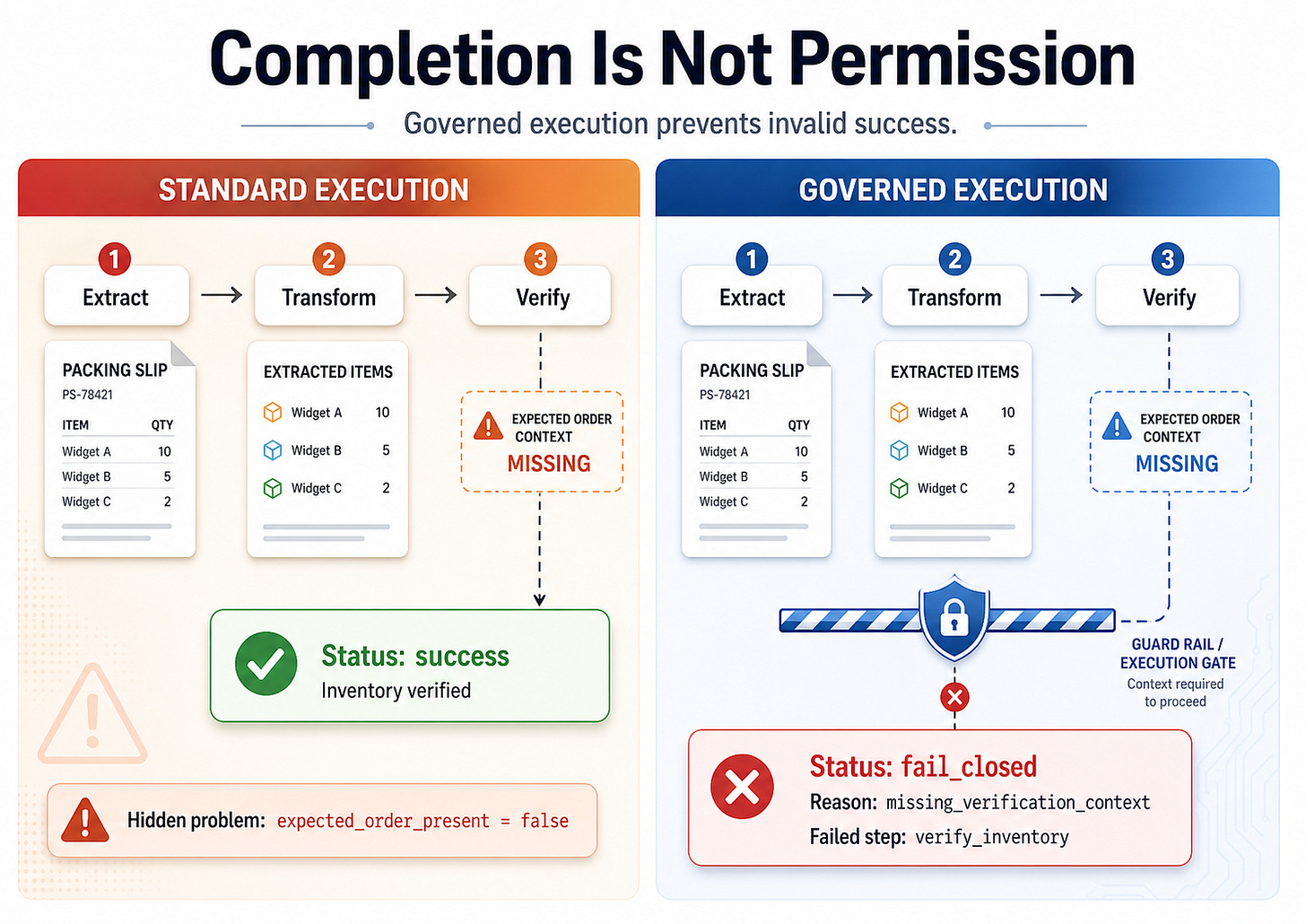

The hidden failure: success without permission

I built a small controlled-failure demo to make this visible.

The scenario is intentionally simple.

A system reconciles a packing slip against an expected order.

The workflow has three steps:

1. Extract items from the packing slip

2. Transform the extracted items into a normalized structure

3. Verify the transformed result against the expected order

The critical point is step three.

The system cannot honestly say “verified” unless it has the expected order.

That expected order is the ground-truth reference.

Without it, the system may still understand the packing slip. It may still parse the items. It may still normalize the quantities.

But it cannot verify the result.

So the demo injects one controlled failure:

After the transform step, the expected order is removed from state.
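To make the setup concrete, here is a minimal Python sketch of the first two steps and the injected failure. It is not the demo's actual code: the function names, the state keys, and the "sku,qty" slip format are all assumptions made for illustration.

```python
from typing import Any

State = dict[str, Any]  # hypothetical shared workflow state


def extract_items(state: State) -> State:
    # Step 1: parse "sku,qty" lines from the raw packing slip (assumed format).
    items = []
    for line in state["packing_slip"].strip().splitlines():
        sku, qty = line.split(",")
        items.append({"sku": sku.strip(), "qty": qty.strip()})
    return {**state, "extracted_items": items}


def transform_items(state: State) -> State:
    # Step 2: normalize to {SKU: integer quantity}.
    normalized = {i["sku"].upper(): int(i["qty"]) for i in state["extracted_items"]}
    return {**state, "normalized_items": normalized}


def inject_failure(state: State) -> State:
    # Controlled failure: drop the ground-truth reference after the transform.
    return {k: v for k, v in state.items() if k != "expected_order"}


state = {
    "packing_slip": "ab-1, 2\ncd-2, 5",
    "expected_order": {"AB-1": 2, "CD-2": 5},
}
state = inject_failure(transform_items(extract_items(state)))
print(sorted(state))  # ['extracted_items', 'normalized_items', 'packing_slip']
```

Everything needed to describe the shipment is still in state. The only thing missing is the reference that verification depends on.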

The demo then runs the same workflow through two runners, a standard one and a governed one. Both face the same condition:

The verification basis is missing.

The standard runner continues anyway.

The governed runner blocks.

The standard system

The standard system represents best-effort orchestration.

It executes the steps.

It carries forward partial state.

It produces a final answer.
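One plausible way a best-effort runner arrives at "verified" is a harmless-looking default. The sketch below is an assumption about how such a step might be written, not the demo's source; `verify_inventory_best_effort` and the state keys are hypothetical names.

```python
def verify_inventory_best_effort(state: dict) -> dict:
    # Best-effort habit: a missing reference silently becomes an empty one.
    expected = state.get("expected_order") or {}
    actual = state.get("normalized_items", {})

    # Only SKUs in the reference are compared, so an empty reference
    # yields zero discrepancies no matter what was shipped.
    discrepancies = [sku for sku, qty in expected.items() if actual.get(sku) != qty]
    if discrepancies:
        return {"status": "failed", "message": f"Discrepancies: {discrepancies}"}

    # hidden_problem is reported here only so the failure is visible;
    # a real best-effort runner would usually not record it at all.
    present = "expected_order" in state
    return {
        "status": "success",
        "message": "Inventory verified; no discrepancies found.",
        "hidden_problem": f"expected_order_present={str(present).lower()}",
    }


state_after_failure = {"normalized_items": {"AB-1": 2, "CD-2": 5}}
result = verify_inventory_best_effort(state_after_failure)
```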

Its output looks like this:

=== STANDARD SYSTEM ===

status: success

message: Inventory verified; no discrepancies found.

hidden_problem: expected_order_present=false

This is the failure.

Not because the software crashed.

It did not crash.

Not because it returned nonsense.

It returned something plausible.

That is what makes the failure dangerous.

It completed the workflow and produced a success-shaped answer, even though the required verification context was missing.

The system said “verified” when verification was not possible.

That is not an output problem.

That is an execution-legitimacy problem.

The governed system

The governed system runs the same workflow against the same injected failure.

But before the verification step is allowed to execute, it checks the required state.

For verification, the required condition is simple:

expected_order must exist.

Since that condition is false, the governed runner refuses to continue.
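A minimal sketch of that check, under the same assumptions as before: `REQUIRED_STATE`, `REASON_CODES`, and `run_governed` are hypothetical names, and the real demo may structure this differently. The point is only that the precondition is evaluated before the step function is ever called.

```python
# Hypothetical declaration of what each step needs before it may run.
REQUIRED_STATE = {"verify_inventory": ["normalized_items", "expected_order"]}
REASON_CODES = {"verify_inventory": "missing_verification_context"}


def verify_inventory(state: dict) -> dict:
    # Compare every SKU seen on either side, not just those in the reference.
    expected, actual = state["expected_order"], state["normalized_items"]
    discrepancies = {
        sku: {"expected": expected.get(sku), "actual": actual.get(sku)}
        for sku in sorted(set(expected) | set(actual))
        if expected.get(sku) != actual.get(sku)
    }
    status = "verified" if not discrepancies else "discrepancy"
    return {"status": status, "discrepancies": discrepancies}


def run_governed(step_name: str, step_fn, state: dict) -> dict:
    # An undeclared step raises KeyError here, which also refuses to proceed.
    missing = [f for f in REQUIRED_STATE[step_name] if state.get(f) is None]
    if missing:
        return {
            "status": "fail_closed",
            "reason_code": REASON_CODES[step_name],
            "failed_step": step_name,
            "missing_fields": missing,
        }
    return step_fn(state)


blocked = run_governed("verify_inventory", verify_inventory,
                       {"normalized_items": {"AB-1": 2}})
allowed = run_governed("verify_inventory", verify_inventory,
                       {"normalized_items": {"AB-1": 2}, "expected_order": {"AB-1": 2}})
```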

Its output looks like this:

=== GOVERNED SYSTEM ===

status: fail_closed

reason_code: missing_verification_context

failed_step: verify_inventory

missing_fields: ["expected_order"]

This is the point.

The governed system does not try to be clever.

It does not produce a softer answer.

It does not summarize around the missing evidence.

It does not pretend internal consistency is external verification.

It blocks.

The system fails closed because the transition is not valid.

That is the architectural difference.

Completion is not correctness

A lot of AI systems are built around task completion.

Did the workflow finish?

Did the agent respond?

Did the tool return something?

Did the chain produce an answer?

Those are useful questions, but they are not enough.

A workflow can complete and still be invalid.

An agent can respond and still lack the authority to make the claim it made.

A verification step can run in name only, while the conditions actually required for verification are absent.

That is the difference this demo exposes.

The standard system verifies completion.

The governed system verifies permission.

That contrast is the core of the whole issue.

Why this matters beyond the demo

The packing slip example is small on purpose.

The point is not inventory.

The point is state legality.

The same pattern appears in larger systems:

- an agent summarizes a document without the final source

- a planner makes a decision using stale context

- a workflow retries until the original failure disappears from view

- a verification step runs with incomplete criteria

- a multi-agent system passes malformed state downstream

- a final answer appears complete even though one required dependency failed

These failures are easy to miss because the output can still look reasonable.

That is why ordinary logging is not enough.

Logs can tell you what happened.

But governed execution asks something stricter:

Was this transition allowed?

That question has to be answered before the system acts, not after someone investigates the incident.
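The same idea extends past field presence. As a hedged sketch, the rules for a step can be written as named predicates over state, so that a stale-context case from the list above is caught the same way as a missing one. The `Rule` type, the `plan` step, the state keys, and the 300-second freshness window are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Callable

MAX_CONTEXT_AGE_S = 300  # assumed freshness window, in seconds


@dataclass(frozen=True)
class Rule:
    reason_code: str
    holds: Callable[[dict], bool]


def context_is_fresh(state: dict) -> bool:
    # A missing timestamp counts as stale: absence of evidence is not freshness.
    if "context_fetched_at" not in state or "now" not in state:
        return False
    return state["now"] - state["context_fetched_at"] <= MAX_CONTEXT_AGE_S


RULES = {
    "plan": [
        Rule("missing_context", lambda s: s.get("context") is not None),
        Rule("stale_context", context_is_fresh),
    ],
}


def violations(step: str, state: dict) -> list[str]:
    # A step with no declared rules is refused rather than waved through.
    if step not in RULES:
        return ["no_rules_defined"]
    return [r.reason_code for r in RULES[step] if not r.holds(state)]


print(violations("plan", {"context": "q3 forecast", "context_fetched_at": 0, "now": 1000}))
# ['stale_context']
```

A runner would call `violations` before invoking the step and proceed only when the list is empty. Passing `now` in through state, rather than reading the clock inside the rule, keeps the check deterministic and testable.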

Abstraction, native architecture, and governance

The progression looks like this:

Framework abstraction:

“I configure the system to do the thing.”

Native architecture:

“I own the code that does the thing.”

Governed execution:

“The system must prove it is allowed to do the thing.”

Each layer solves a different problem.

Frameworks solve speed.

Native architectures solve ownership.

Governed execution solves legitimacy.

This is why simply moving away from frameworks is not the final answer.

A custom agent stack can still fail silently if it lacks enforced state rules.

The real shift is not from LangChain to custom code.

The deeper shift is from assumed execution to validated transition.

What the demo does not prove

This demo does not prove that one model is smarter than another.

It does not prove that frameworks are useless.

It does not prove that every standard system behaves this way.

It does not attempt to benchmark accuracy.

That is intentional.

The demo isolates one architectural question:

What happens when a workflow loses the context required for verification?

In the standard path, the system still produces success.

In the governed path, the system blocks and explains why.

That is the proof.

The real production question

The future of reliable AI systems will not be decided only by better prompts, better models, or better orchestration libraries.

Those matter.

But they do not answer the deepest operational question:

Can the system explain why it was allowed to act?

If the answer is no, then the system is still operating on trust.

Not trust in the model.

Trust in the assumption that every step had what it needed.

Trust that memory was current.

Trust that verification really happened.

Trust that partial state did not become final output.

Governed execution removes that assumption.

It makes permission explicit.
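One way to make permission explicit is to attach a record of the checks to every result, whether the step ran or not. This is a sketch under assumed names (`run_with_receipt`, the `receipt` fields); it is not taken from the demo.

```python
def run_with_receipt(step_name: str, step_fn, required: list, state: dict) -> dict:
    # Evaluate every precondition and keep the outcome, pass or fail.
    checks = {field: state.get(field) is not None for field in required}
    receipt = {"step": step_name, "checks": checks, "allowed": all(checks.values())}

    if not receipt["allowed"]:
        # Fail closed: the step function is never called.
        return {"status": "fail_closed", "receipt": receipt}
    return {"status": "ok", "result": step_fn(state), "receipt": receipt}


ok = run_with_receipt(
    "verify_inventory", lambda s: "verified",
    ["expected_order"], {"expected_order": {"AB-1": 2}},
)
blocked = run_with_receipt(
    "verify_inventory", lambda s: "verified",
    ["expected_order"], {},
)
```

With a receipt on every result, the question of why the system was allowed to act can be answered from the output itself rather than reconstructed from logs afterward.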

Final thought

The problem is not that AI systems fail.

All systems fail.

The problem is when they fail while still presenting success.

That is the dangerous zone.

A governed system does not merely try to produce better answers.

It prevents invalid answers from being returned as results.

That is the difference between a workflow that completes and a workflow that is allowed to complete.

And for production AI systems, that difference is going to matter more than most teams realize.

Reference article:

Why AI Engineers Are Moving Beyond LangChain to Native Agent Architectures | Towards Data Science https://share.google/h4WGX3wGRdRhOAD9O

Controlled failure demo:

https://brendonrcoleman.com/laviathon/